McSfM: Multi-camera Based Incremental Structure-from-Motion

Abstract

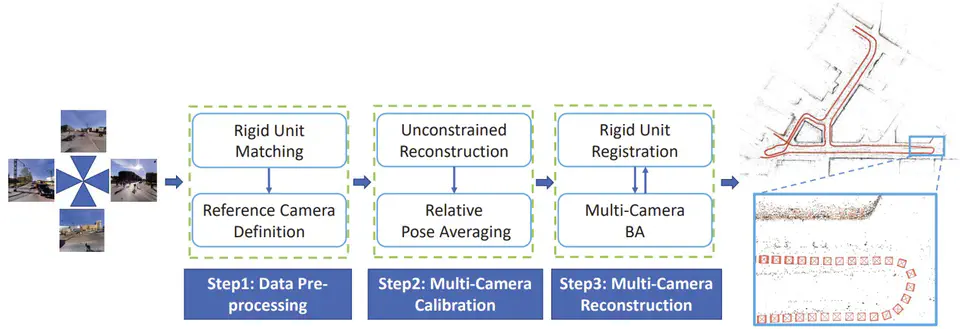

Fully perceiving the surrounding world is a vital capability for autonomous robots. To achieve this goal, the data-collection platform is usually equipped with a multi-camera system, and structure-from-motion (SfM) is used for scene reconstruction. However, although incremental SfM achieves high-precision modeling, it is inefficient and prone to scene drift in large-scale reconstruction tasks. In this paper, we propose a tailored incremental SfM framework for multi-camera systems, in which the internal relative poses between cameras are not only calibrated automatically but also serve as an additional constraint that improves system robustness. Previous multi-camera modeling work has mainly focused on stereo setups or on multi-camera systems with known calibration information, whereas we allow arbitrary configurations and require only images as input. First, one camera is selected as the reference camera, and the other cameras in the multi-camera system are denoted as non-reference cameras. Based on the pose relationship between the reference and non-reference cameras, each non-reference camera pose can be derived from the reference camera pose and the corresponding internal relative pose. Then, a two-stage multi-camera camera registration module is proposed, in which the internal relative poses are first computed by local motion averaging, and the rigid units are then registered incrementally. Finally, a multi-camera bundle adjustment is put forth to iteratively refine the reference camera poses and the internal relative poses. Experiments demonstrate that our system achieves higher accuracy and robustness on benchmark data than state-of-the-art SfM and simultaneous localization and mapping (SLAM) methods.
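To illustrate how a non-reference camera pose follows from the reference camera pose and an internal relative pose, the sketch below composes two rigid transforms in plain Python. It is a minimal illustration, not the paper's implementation. It assumes a world-to-camera convention, x_cam = R x_world + t, and an internal relative pose that maps reference-camera coordinates to non-reference-camera coordinates. The function names `compose_pose` and `relative_pose` and all numeric values are hypothetical.

```python
import math

def matmul(a, b):
    """Product of two 3x3 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def matvec(a, v):
    """Product of a 3x3 matrix and a 3-vector."""
    return [sum(a[i][k] * v[k] for k in range(3)) for i in range(3)]

def transpose(a):
    return [[a[j][i] for j in range(3)] for i in range(3)]

def rot_z(theta):
    """Rotation by theta radians about the z axis."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def compose_pose(R_ref, t_ref, R_rel, t_rel):
    """Non-reference pose from the reference pose and the internal relative pose.

    Assumed convention (not taken from the paper):
        x_ref = R_ref x_world + t_ref
        x_cam = R_rel x_ref   + t_rel
    so  x_cam = (R_rel R_ref) x_world + (R_rel t_ref + t_rel).
    """
    R_cam = matmul(R_rel, R_ref)
    t_cam = [a + b for a, b in zip(matvec(R_rel, t_ref), t_rel)]
    return R_cam, t_cam

def relative_pose(R_ref, t_ref, R_cam, t_cam):
    """Inverse relation: internal relative pose from two absolute poses."""
    R_rel = matmul(R_cam, transpose(R_ref))
    t_rel = [a - b for a, b in zip(t_cam, matvec(R_rel, t_ref))]
    return R_rel, t_rel

# Hypothetical reference pose and internal relative pose.
R_ref, t_ref = rot_z(0.3), [1.0, 2.0, 3.0]
R_rel, t_rel = rot_z(math.pi / 2), [0.5, 0.0, 0.0]

R_cam, t_cam = compose_pose(R_ref, t_ref, R_rel, t_rel)

# Map one world point both ways: via the reference camera, and directly.
x_world = [1.0, 0.0, 0.0]
x_ref = [a + b for a, b in zip(matvec(R_ref, x_world), t_ref)]
x_via_ref = [a + b for a, b in zip(matvec(R_rel, x_ref), t_rel)]
x_direct = [a + b for a, b in zip(matvec(R_cam, x_world), t_cam)]

# Recover the internal relative pose from the two absolute poses.
R_rel_back, t_rel_back = relative_pose(R_ref, t_ref, R_cam, t_cam)
```

Under this parameterization, only the reference camera pose per capture and one internal relative pose per non-reference camera are free variables, which is what lets the relative poses act as a shared constraint across all captures.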